Editor’s note: I reached out to Sarah for comment on my STPA Deep Dive editorial, and unfortunately I used the wrong email address and didn’t provide enough time for a response. But when she finally did see my inquiry, Sarah was kind enough to respond and share her slides.

Mark,

First off, sorry for the delay in responding! I hope this can still be of use. I am in school right now, so I’m not checking this email on a regular basis.

I agree that STPA can’t be self-taught, and that is definitely an area where the STPA community can continue to evolve. Prof. Leveson conducts an annual free STPA workshop that can be attended both in person and virtually. Anyone interested in learning more should definitely check out her website and consider attending next year.

One of the reasons STPA takes a while right now is that it’s not used in the design phase. Therefore, testers have to spend a lot of time developing the model and then conducting the analysis. If design engineers incorporated STPA into their processes and handed the resulting model to testers, all testers would need to do is add the test-specific aspects to the model and run the resulting delta analysis. While STPA takes longer on the test side compared to traditional methods, it is a lot faster on the design side, and because it focuses on system behavior, it can be readily adapted to a flight test program. Testers seem to get the value of STPA, but we haven’t had as much success pushing the rope on the design side. I think if we could get designers on board, this would be a game changer.

Regarding probabilities: it helps to think about what the purpose of using probabilities in a safety analysis is. I see that purpose as twofold: to determine the likelihood of bad outcomes so the test team can focus on the most likely ones, and to communicate the risk to approval authorities. Are there other ways to both determine where to focus and to communicate? I think STPA can provide avenues for both. For focusing efforts, you can use traceability to more severe hazards, as well as how many scenarios a particular mitigation addresses (I call this low-hanging fruit).

For communication, I have been in many test programs where we make our best guess at probability (severity is much easier to determine), but we then realize hazards more often than expected because we initially lacked knowledge of the system’s behavior. Because our approval process is based on severity and probability, we may therefore have done inadequate safety planning and approval. Also, because we use a specific number, 10^-9 for example, it may lead people to believe there was more analytical rigor behind the probability analysis than there actually was. Lastly, the functional diagrams are a great way to communicate function and potential risks to a safety board or approval authority. They ensure everyone has the same mental model of the system under test.

To be clear: I am not anti-probabilities. However, there are a lot of system behaviors that can’t be modeled using statistics, and we often try to shoehorn them into a probabilistic methodology in a way that could lead us down a bad path. As I believe I mentioned during the talk, I think overall we are very good at safety, particularly from a mechanical reliability perspective. We have an opportunity to continue using those methods while also looking at system behavior in a different way to address the challenges we often face now with complex software, system interactions, and human/machine interactions.

Please shout if you’d like to talk about any points I’ve made.

Sarah

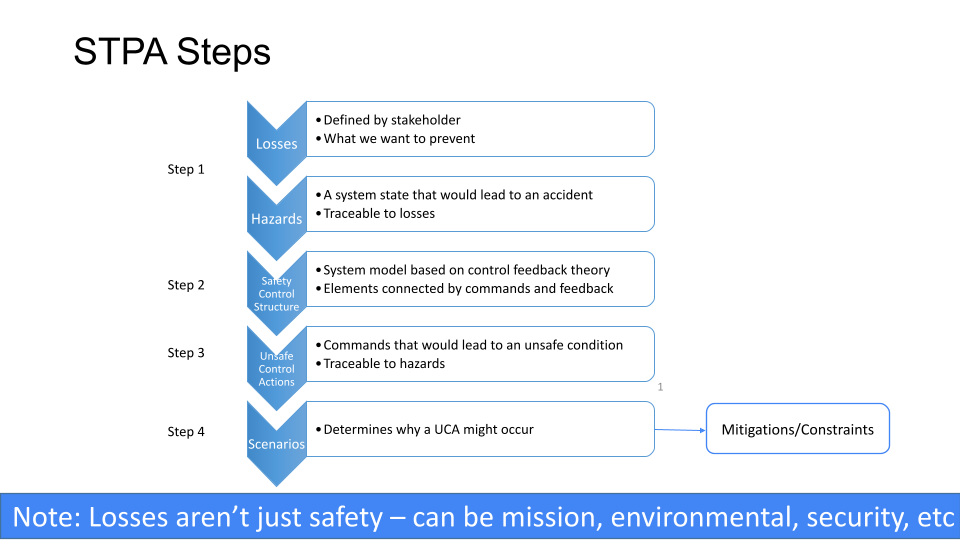

Download the slides: Systems-Theoretic Process Analysis for Flight Test Safety.

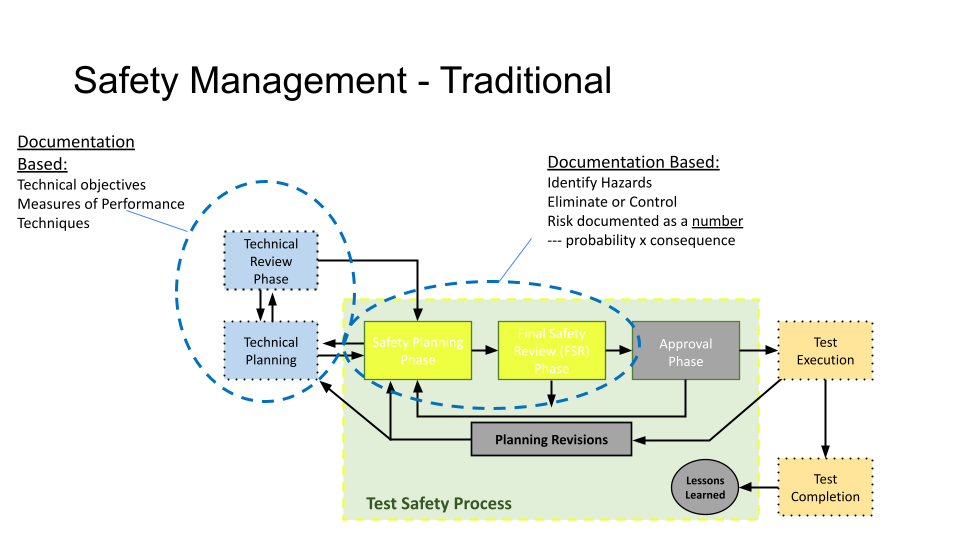

An example from the slide presentation showing Traditional Safety Management.